Train, Fine-Tune, and Deploy AI Models at Scale

Supervised fine-tuning, reinforcement learning, benchmarking, multi-tower pipelines, and GPU deployment — all in one platform.

Pipeline builder

Build multi-tower model pipelines visually (Coming Soon)

Combine multiple models in the same pipeline — time series analysis, transformer classifiers, ensemble architectures — all connected in a visual editor.

- Multi-tower architecture: Chain specialized models together. Feed time series data through an analyzer, then route outputs to a transformer for classification or generation.

- Visual pipeline editor: Drag and drop models, configure data flows, and set up branching logic without writing orchestration code.

- Unified data routing: Automatically handle data format conversion between models. Connect any output to any input across your pipeline.

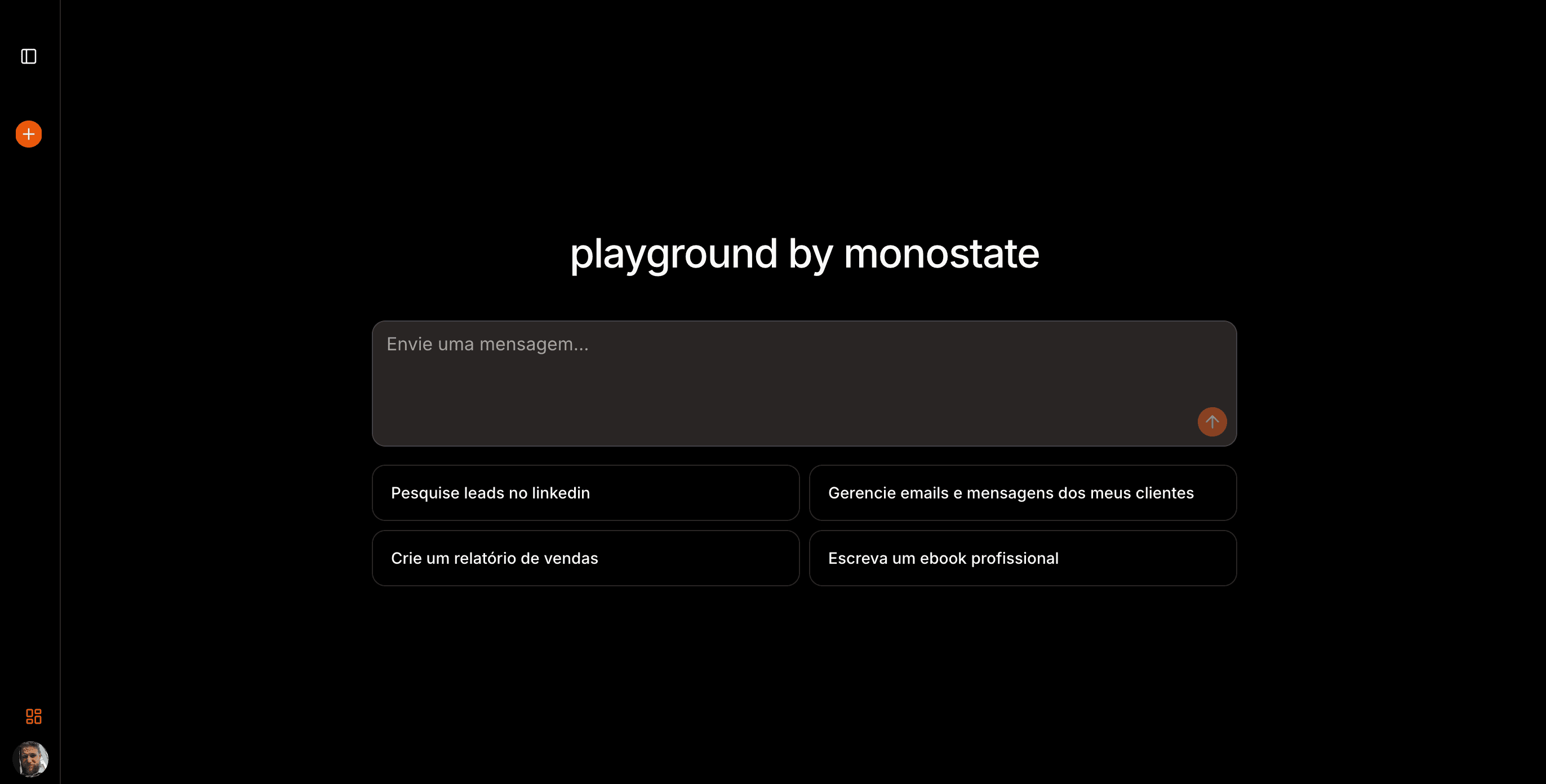

From data to deployment — one platform.

Why Monostate

Most ML teams juggle a dozen disconnected tools: one for data prep, another for training, a third for evaluation, and yet another for deployment. Monostate replaces that fragmented stack with a unified platform where every step — from dataset curation to production serving — lives in one place.

We support both commercial models (OpenAI, Anthropic, Google) and open-source models (Llama, Mistral, Phi, Qwen) on the same platform. Run benchmarks across all of them, fine-tune the open-source ones, and deploy whichever performs best — without switching tools.

Built-in GPU management means you never have to SSH into machines, write SLURM scripts, or negotiate with cloud providers. Select your hardware tier, click deploy, and Monostate handles provisioning, scaling, health checks, and cost optimization automatically.

The visual pipeline builder lets you design multi-tower architectures by connecting model blocks on a drag-and-drop canvas. Chain a time series forecaster into a transformer classifier, add an ensemble layer, and deploy the entire graph as a single endpoint.
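To make the multi-tower idea concrete, here is a minimal sketch in plain Python of chaining two stages so that one model's output feeds the next. It does not use the Monostate SDK: `Stage`, `run_graph`, `moving_average`, and `threshold_classifier` are hypothetical names, and the toy functions only stand in for a real forecaster and classifier.

```python
from dataclasses import dataclass
from typing import Any, Callable, List


@dataclass
class Stage:
    """One tower in the pipeline: a name plus a callable that transforms its input."""
    name: str
    fn: Callable[[Any], Any]


def run_graph(stages: List[Stage], data: Any) -> Any:
    """Feed data through each stage in order, passing each output to the next stage."""
    for stage in stages:
        data = stage.fn(data)
    return data


def moving_average(series: List[float], window: int = 3) -> List[float]:
    """Toy stand-in for a time series analyzer: trailing moving average."""
    return [
        sum(series[i - window + 1 : i + 1]) / window
        for i in range(window - 1, len(series))
    ]


def threshold_classifier(features: List[float], cutoff: float = 5.0) -> List[str]:
    """Toy stand-in for a classifier: label each smoothed value by a fixed cutoff."""
    return ["high" if x > cutoff else "low" for x in features]


# Hypothetical two-stage graph: analyzer output becomes classifier input.
pipeline = [
    Stage("time-series-analyzer", moving_average),
    Stage("classifier", threshold_classifier),
]

labels = run_graph(pipeline, [1.0, 2.0, 3.0, 10.0, 11.0, 12.0])
print(labels)  # ['low', 'low', 'high', 'high']
```

A real pipeline would likely need branching and format conversion between stages; the linear list here is only the simplest case of the graph described above.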

Capabilities

Everything you need to train and deploy

No-Code Training

Launch fine-tuning jobs without writing training scripts. Configure everything through an intuitive UI.

Scale to Any GPU

From A100s to H100s, deploy on the hardware you need. Automatic provisioning and cluster management.

Fully Configurable

Customize hyperparameters, data splits, and model architectures. Full control over every aspect of your training pipeline.
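For a sense of what configurable hyperparameters and data splits might look like in code, the sketch below validates a small settings object and produces a reproducible train/validation/test split. This is an illustration under assumptions, not Monostate's configuration schema: `TrainingConfig`, its fields and defaults, and `split_dataset` are all hypothetical.

```python
import random
from dataclasses import dataclass
from typing import List, Sequence, Tuple


@dataclass(frozen=True)
class TrainingConfig:
    """Hypothetical job settings; field names are illustrative, not a Monostate schema."""
    learning_rate: float = 2e-5
    epochs: int = 3
    batch_size: int = 8
    train_fraction: float = 0.8
    val_fraction: float = 0.1  # whatever remains becomes the test split
    seed: int = 0

    def __post_init__(self) -> None:
        if self.learning_rate <= 0 or self.epochs < 1 or self.batch_size < 1:
            raise ValueError("learning_rate, epochs, and batch_size must be positive")
        if not (0 < self.train_fraction < 1 and 0 <= self.val_fraction < 1):
            raise ValueError("train_fraction must be in (0, 1) and val_fraction in [0, 1)")
        if self.train_fraction + self.val_fraction > 1:
            raise ValueError("train_fraction + val_fraction must not exceed 1")


def split_dataset(rows: Sequence, cfg: TrainingConfig) -> Tuple[List, List, List]:
    """Shuffle deterministically with cfg.seed, then cut into train/val/test."""
    shuffled = list(rows)
    random.Random(cfg.seed).shuffle(shuffled)
    n_train = int(len(shuffled) * cfg.train_fraction)
    n_val = int(len(shuffled) * cfg.val_fraction)
    return (
        shuffled[:n_train],
        shuffled[n_train : n_train + n_val],
        shuffled[n_train + n_val :],
    )


cfg = TrainingConfig(epochs=5)
train, val, test = split_dataset(range(100), cfg)
print(len(train), len(val), len(test))  # 80 10 10
```

Fixing the seed in the config keeps the split identical across runs, which makes evaluation results comparable between jobs.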

Developer-first

Integrate with a few lines of code

JavaScript

    // Fine-tune a model with Monostate
    const job = await monostate.fineTune({
      model: 'llama-3-70b',
      dataset: 'my-dataset',
      method: 'sft'
    });

Python

    # Launch a training pipeline
    from monostate import Pipeline

    pipeline = Pipeline()
    pipeline.add_model('time-series-analyzer')
    pipeline.add_model('transformer-classifier')
    pipeline.run(dataset='my-data', gpus=4)

Real use cases

What people are training

Custom Chat Models

Training models on proprietary data to build domain-specific chatbots that understand your business context.

Creative AI Research

Fine-tuning Stable Diffusion and other diffusion models for artistic exploration and generative research.

Specialized Agent Models

Building intent classifiers, conversation models, and voice models to power smarter, more capable AI agents.

Language Translation

Training translation models for underserved languages and dialects with custom parallel corpora.

Socionics Research

Fine-tuning models on personality type datasets to advance socionics and psychometric research.

School Matching

Helping parents find the right school by training recommendation models on private school network data.

Stop configuring. Start training.

While other platforms make you manage infrastructure, Monostate lets you focus on what matters: your models and data. Launch your first training job in minutes.